Right-size Your Work for Better Predictability

Originally published May 2026

Many teams spend significant time trying to improve predictability. Work is estimated, refined, discussed, and broken into smaller pieces, yet delivery timelines still feel inconsistent. Some items move quickly while others unexpectedly linger. Even work that appears similar on the surface can produce dramatically different outcomes.

When work enters a delivery system, it moves through a network of interconnected activities, policies, people, and feedback mechanisms that together enable value delivery. This includes workflow design, dependencies, communication paths, approvals, controls, handoffs, and the ways decisions are made throughout the flow of work. Every delivery system contains variability and uncertainty, yet teams often plan as though workflows are stable and predictable.

The Value of Right-sizing

Knowledge work rarely moves in a perfectly predictable way, especially in environments involving discovery work, AI initiatives, experimentation, or highly collaborative efforts. Dependencies emerge unexpectedly, reviews take longer than anticipated, priorities shift, and interruptions occur. Handoffs and approvals introduce delays that are often difficult to anticipate upfront. Because of this, it becomes important to understand how large a work item can reasonably be within the variability of the system.

This is where right-sizing comes into play. Right-sizing is the practice of shaping work items so that each can deliver value within a reasonable timeframe, given the variability of the system. Rather than attempting to eliminate uncertainty through increasingly detailed estimation, right-sizing accepts that variability and uncertainty are inherent characteristics of knowledge work. This shifts teams away from deterministic thinking and toward probabilistic thinking. Instead of demanding certainty where certainty does not exist, teams begin using data to better understand likelihood, risk, and system behavior.

Cycle Time and the Anatomy of a CTD Chart

A key part of this approach involves understanding how work behaves in the system, which begins with cycle time. Cycle time is the amount of elapsed time from when work begins until it is completed. Unlike estimation, which attempts to predict effort before work starts, cycle time reflects observed reality after work has flowed through the system. When teams collect cycle time data across many completed work items, patterns begin to emerge. Over time, these completion times form a distribution that can be used to forecast completion likelihoods and help determine whether work is appropriately sized. Most importantly, this distribution reflects the actual behavior of the system.
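As a minimal sketch of how cycle time data might be collected, the Python snippet below derives cycle times from start and finish dates. The item records and the `cycle_time_days` helper are hypothetical, and counting both the start day and the finish day (so that work started and finished on the same day has a cycle time of one day) is an assumed convention; a team may reasonably choose a different one.

```python
from datetime import date


def cycle_time_days(started: date, finished: date) -> int:
    """Elapsed calendar days from start to finish, counting both end days.

    Assumption: inclusive counting, so same-day work has a cycle time of 1.
    """
    if finished < started:
        raise ValueError("finished date is before started date")
    return (finished - started).days + 1


# Hypothetical completed work items: (id, started, finished).
completed_items = [
    ("A-1", date(2026, 3, 2), date(2026, 3, 6)),
    ("A-2", date(2026, 3, 3), date(2026, 3, 11)),
    ("A-3", date(2026, 3, 9), date(2026, 3, 9)),
]

cycle_times = [cycle_time_days(s, f) for _, s, f in completed_items]
print(cycle_times)
```

Whatever convention is chosen, applying it consistently matters more than the convention itself, because the distribution is only meaningful when every item is measured the same way.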

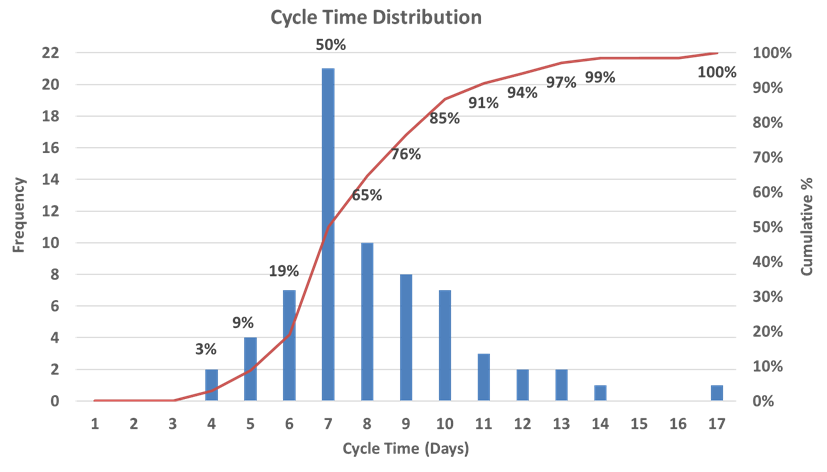

A Cycle Time Distribution (CTD) chart visualizes this information (see image below). The horizontal axis represents cycle time, typically measured in days. Moving from left to right means work items are taking longer to complete. The left vertical axis represents frequency, showing how many work items completed at each cycle time. The right vertical axis represents cumulative frequency, showing the percentage of work completed within a given number of days.

A Cycle Time Distribution (CTD) chart visualizes how long work items take to complete across a workflow, helping teams better understand variability, risk, and delivery predictability.

Once the chart is created, patterns in system behavior become much easier to understand. For example, the chart shows that 50% of work items complete in seven or fewer days, while 85% complete in ten or fewer days. This creates a far more informative picture of delivery performance than relying solely on estimates. Instead of debating how large work “feels,” teams can use actual system data to understand delivery likelihoods and make better decisions about how work should flow through the system.
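The numbers behind such a chart can be sketched in a few lines of Python. The example below is illustrative only: the `CYCLE_TIMES` data set is invented so that it reproduces the 50% and 85% figures mentioned above, and `ctd_table` is a hypothetical helper, not part of any charting tool.

```python
from collections import Counter

# Hypothetical cycle times (in days) for 20 completed work items.
CYCLE_TIMES = [2, 3, 4, 4, 5, 5, 6, 6, 7, 7, 8, 8, 9, 9, 9, 10, 10, 12, 14, 18]


def ctd_table(cycle_times):
    """Return rows of (days, frequency, cumulative percent), sorted by days."""
    counts = Counter(cycle_times)
    total = len(cycle_times)
    rows, running = [], 0
    for days in sorted(counts):
        running += counts[days]  # items completed in `days` or fewer
        rows.append((days, counts[days], 100 * running / total))
    return rows


# Text rendering: frequency as a bar (left axis), cumulative % (right axis).
for days, freq, cum_pct in ctd_table(CYCLE_TIMES):
    print(f"{days:>3} days | {'#' * freq:<4} | {cum_pct:5.1f}%")
```

In this sample, the row for 7 days shows a cumulative 50% and the row for 10 days shows 85%, which is how the two readings in the paragraph above would be taken from a real chart.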

Let’s Right-size

Now the cycle time data becomes actionable. Suppose a team wants work items to complete in ten or fewer days, and their CTD chart shows the system completes work within that timeframe 85% of the time. When a new work item is discussed, the question becomes: “Does this appear capable of being completed within ten or fewer days given how the system typically behaves?” If the answer is yes, the item is likely appropriately sized. If the answer is no, the work item may need to be split, simplified, or restructured before work begins.
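To make the scenario above concrete, the following sketch computes how often past work finished within a target timeframe. The `history` data and the `share_within` helper are hypothetical. The code supplies only the historical likelihood; whether a new item resembles that history is still a judgment the team makes in conversation.

```python
def share_within(cycle_times, target_days):
    """Fraction of historical items that finished in target_days or fewer."""
    if not cycle_times:
        raise ValueError("no completed items to learn from")
    return sum(1 for ct in cycle_times if ct <= target_days) / len(cycle_times)


# Hypothetical cycle times (in days) for 20 completed work items.
history = [2, 3, 4, 4, 5, 5, 6, 6, 7, 7, 8, 8, 9, 9, 9, 10, 10, 12, 14, 18]

print(f"{share_within(history, 10):.0%} of past items finished within 10 days")
```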

This is the essence of right-sizing. The goal is not to estimate effort with increasing precision. The goal is to shape work so it better aligns with the demonstrated behavior of the system. This differs significantly from approaches such as story points and velocity. Story points measure relative size without considering how the delivery system itself behaves. A CTD chart, on the other hand, uses actual system performance to help shape work around realistic delivery expectations.

Service Level Expectation (SLE)

Once a team understands its cycle time distribution, it can begin establishing a Service Level Expectation (SLE). An SLE is a probabilistic expectation of delivery time based on historical system performance. It allows teams to communicate delivery expectations with clients, stakeholders, leadership, and one another using actual system data. Within the Kanban community, the 85th percentile is commonly used because it balances predictability with realism. It captures most of the distribution while still recognizing that some variability will always exist. However, any percentile can serve as an SLE, as long as it is stated together with its timeframe, for example “ten or fewer days, 85% of the time.”
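One way to derive an SLE from historical data is the nearest-rank percentile: sort the cycle times and take the smallest value that at least the chosen share of items met. The sketch below assumes that method, a whole-number percentile, and an invented `history` data set; `sle_days` is a hypothetical helper, and other percentile definitions (such as interpolating ones) may give slightly different answers.

```python
def sle_days(cycle_times, percentile=85):
    """Smallest cycle time that at least `percentile`% of items met.

    Uses the nearest-rank method; `percentile` is a whole number from 1 to 100.
    """
    if not cycle_times:
        raise ValueError("no completed items to learn from")
    if not 0 < percentile <= 100:
        raise ValueError("percentile must be between 1 and 100")
    ordered = sorted(cycle_times)
    # Ceiling of percentile * n / 100, done in integer arithmetic
    # to avoid floating-point rounding surprises.
    rank = (percentile * len(ordered) + 99) // 100
    return ordered[rank - 1]


# Hypothetical cycle times (in days) for 20 completed work items.
history = [2, 3, 4, 4, 5, 5, 6, 6, 7, 7, 8, 8, 9, 9, 9, 10, 10, 12, 14, 18]

days = sle_days(history, 85)
print(f"SLE: {days} or fewer days, 85% of the time")
```

Nearest rank is used here because it always returns a cycle time that actually occurred, which keeps the SLE easy to explain to stakeholders.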

As teams begin thinking probabilistically, conversations about sizing and forecasting change significantly. Instead of debating whether work “feels” like a certain size, teams begin asking whether work appears capable of flowing through the system within the expected timeframe.

Final Thoughts

Variability cannot be eliminated from a workflow, but it can be understood and managed. Cycle Time Distribution charts make variability visible. Percentiles translate that variability into meaningful delivery expectations. Right-sizing then uses those insights to shape work in ways that better align with how the system actually performs.

Predictability is not about achieving certainty. It is about understanding the system well enough to make informed decisions in the presence of uncertainty. When teams begin to see those patterns clearly, predictability stops feeling like luck and starts becoming something they can actively improve.